A month ago, I got a pre-briefing on Microsoft’s Azure Machine Learning with Roger Barga (group program manager, machine learning) and Joseph Sirosh (CVP, Machine Learning). Yesterday, Microsoft made it available to customers and partners, so now seems like the right time to talk about how it fits into the broader market.

The TL;DR is that I’m quite impressed by the story and demo Microsoft showed around machine learning. They’ve paid attention to the need for simplicity while enabling the flexibility that any serious developer or data scientist will want.

Here’s an example of a slide from their briefing, which obviously resonates with us here at RedMonk:

For example, we constantly hear that toolsets like Apache Mahout (for Hadoop) are more prototype than anything you can actually put into production. You need a deep knowledge of machine learning to get things up and running, whereas Microsoft is making the effort to curate solid algorithms. This makes for a nice overlap between Microsoft product and research, the latter of which has some outstanding examples of machine learning (such as Rick Rashid’s demonstration of real-time translation from English to Chinese in late 2012).

In action, Azure ML looks a lot like Yahoo Pipes for data science. You plug in sources and sinks, without thinking too much about how that all happens. The main expertise needed seems to be around two areas:

- (Largely glossed over) Cleaning the data before working with it

- Choosing an algorithm that makes sense given your data and assumptions

Both of these require expertise in machine learning, and I’m not yet sure how Microsoft plans to get around that. Their target market, as described to me, is “emerging data scientists” coming out of universities and bootcamps: somewhere between the experts and the data analysts who spend all day long doing SQL queries and data modeling. One approach would be to compare the data against various distributions to check the best fit, and whether that fit suits the chosen algorithm; another would be to prefer nonparametric algorithms.
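To make the distribution-comparison idea concrete, here is a minimal sketch in plain Python. It is not anything Microsoft showed or part of Azure ML; the helper names `ks_distance` and `best_fit` are hypothetical, and the choice of three candidate distributions (normal, uniform, exponential) is my own assumption. It fits each candidate to the data and ranks them by Kolmogorov-Smirnov distance, where a smaller distance means a closer fit.

```python
import math
import random
from statistics import NormalDist, fmean, stdev


def ks_distance(sample, cdf):
    """Largest gap between the sample's empirical CDF and a candidate CDF."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        # The empirical CDF steps from i/n to (i+1)/n at x; check both sides.
        d = max(d, (i + 1) / n - f, f - i / n)
    return d


def best_fit(sample):
    """Return (name, distances) for the closest of a few candidate fits.

    Hypothetical helper: the candidate list is an illustrative assumption,
    not an exhaustive or recommended set.
    """
    lo, hi = min(sample), max(sample)
    if len(sample) < 2 or lo == hi:
        raise ValueError("need at least two distinct values")
    mean = fmean(sample)
    candidates = {
        "normal": NormalDist(mean, stdev(sample)).cdf,
        "uniform": lambda x: (x - lo) / (hi - lo),
    }
    # An exponential fit only makes sense for non-negative data.
    if lo >= 0:
        candidates["exponential"] = lambda x: 1.0 - math.exp(-x / mean)
    distances = {name: ks_distance(sample, cdf)
                 for name, cdf in candidates.items()}
    return min(distances, key=distances.get), distances


# Usage: synthetic data stands in for a real, already-cleaned column.
random.seed(0)
column = [random.gauss(10, 2) for _ in range(2000)]
name, distances = best_fit(column)
print(name, {k: round(v, 3) for k, v in distances.items()})
```

If the closest fit is still poor, or the chosen algorithm assumes a shape the data doesn’t have, that is the cue to fall back on a nonparametric method.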

Here’s a screenshot of a pipeline:

From my point of view, a critical feature of any pipeline like this is flexibility. Microsoft’s never going to provide every algorithm of interest. The best they can hope for is to cover the 80% of common use cases; however, there’s no guarantee that even the 80% is the same 80% across every customer and use case. That’s why flexibility is vital to tools like this, even when they’re trying to democratize a complex problem domain.

That’s why I was thrilled to hear them describe the flexibility in the platform:

- You can create custom data ingress/egress modules

- You can apply arbitrary R operations for data transformation

- You can upload custom R packages

- You can eventually productionize models through the machine-learning API

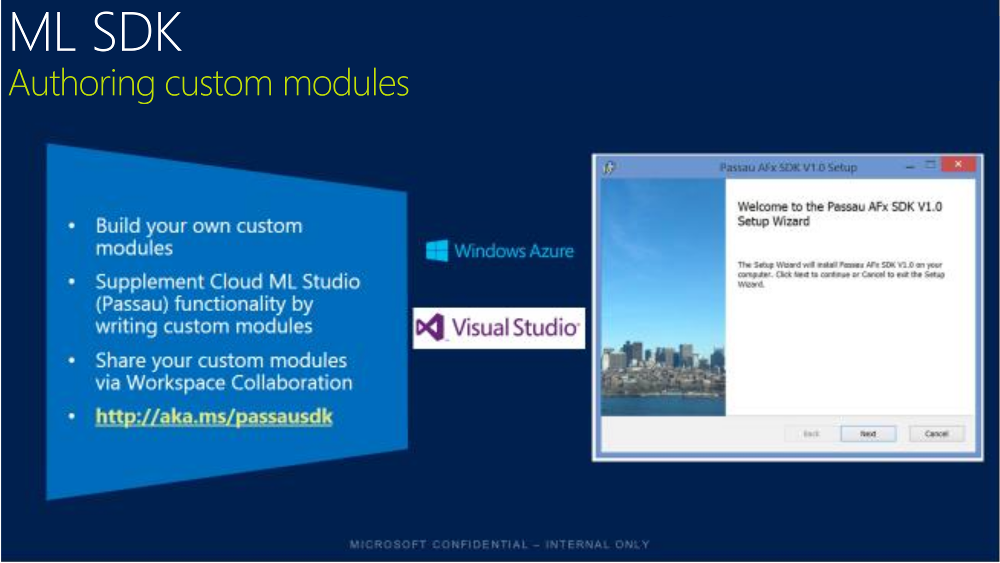

All of this, except for the one-off R operations, will rely on the machine-learning SDK:

Much like higher-level AWS services such as Elastic Beanstalk, you don’t pay for the stack, you pay for the underlying resources consumed. In other words, you don’t pay to set up the job, you pay when you click run.

Microsoft’s got a solid product offering here. They need to figure out how to tell the right stories to the right audiences about ease of use and flexibility, build broader appeal to both forward-leaning and enterprise audiences, and continue to focus on constructing a larger data-science offering on Azure and on Windows (including partners like Hortonworks). They also need to continue reaching toward openness, as they’ve shown with things like Linux IaaS support and Node.js support. One example would be support for Python, an increasingly popular language for data science.

Disclosure: Microsoft and AWS have been clients. Hortonworks is not.
