The asymmetry of open source technologies’ ability to penetrate the enterprise datacenter is not difficult to understand. Besides questions of maturity, the fact is that different product categories carry different risk profiles, a major factor for enterprises more afraid of making the wrong choice than interested in the right one. Red Hat’s sustained growth, for example, indicates that operating systems are experiencing minimal friction in terms of adoption. Non-relational databases, on the other hand, while widely used, aren’t yet trusted by corporate buyers in quite the same way, with Hadoop a notable exception.

One area that hasn’t endured the same level of skepticism is open source configuration management software. While there are many options, system administrators and developers are favoring Chef and Puppet at the expense of competing solutions. But with that success comes an obvious question: which do I pick, and why?

Although discussions of the platforms’ relative technical merits can be interesting – the comments on this HN thread display the usual range of opinions on the subject – we’re typically more interested in usage patterns. Quality of implementation is an important consideration in technology selection, but history demonstrates adequately that technically inferior solutions can and often do outperform competitors. Because there is no single canonical source for usage, we instead examine a variety of proxy metrics, looking for patterns that indicate a broader narrative at work. Here’s a non-comprehensive run through some of the metrics that we regularly evaluate.

Debian

Update: Spoke with Opscode’s Jesse Robbins who wanted me to be aware that Chef’s under-representation on Debian is likely due in part to the fact that they run and manage their own repositories, but also because they recommend deployment via the RubyGems package manager over Debian’s apt-get. So consider yourselves caveated; the charts otherwise remain untouched.

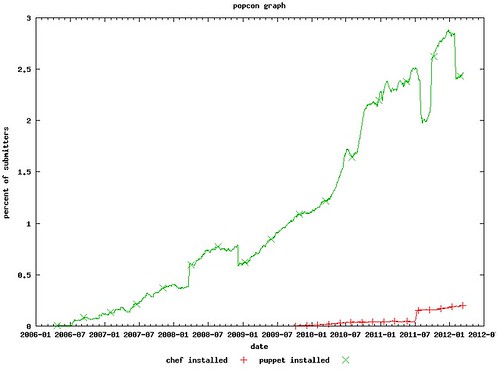

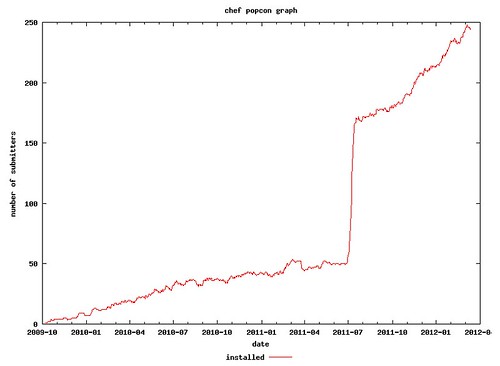

Each running instance of the Linux distribution Debian has the ability to phone home telemetry of the packages installed on the system. Called Popularity Contest, this provides insight into what the relative adoption rates of various software packages are within the subset of the Debian community that has elected to self-report application information. This graph, then, reflects adoption of Chef relative to Puppet within the Debian community.

As you can see, Puppet substantially outperforms Chef in this context. Part of this is the fact that Puppet is the older project by four years, and thus has had additional time to build adoption numbers. If we look more closely at the data, however, there are indications that adoption may also be a function of packaging issues. In mid-July of last year, Opscode (the company behind Chef) made updated packages available for Debian and related distributions. Almost immediately thereafter, according to Debian Popularity Contest data, adoption spiked on that platform.

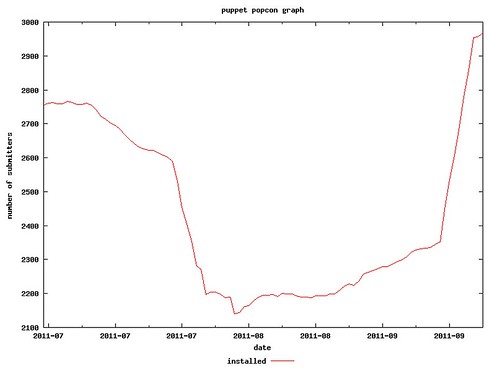

More interestingly, the adoption of Chef enabled by the new packages may have led to a transient decline in reported Puppet adoption. If we examine a three month period of Puppet adoption beginning in July, the impact on the overall trajectory is apparent.

From a macro perspective, the data indicates Puppet remains more broadly adopted within the Debian community than Chef. But Chef is growing, and the evidence does seem to confirm that growth for one is negatively correlated with growth for the other.

GitHub

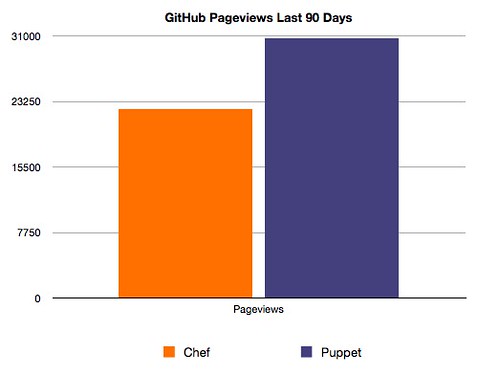

GitHub is one of the largest and most important developer communities in existence, so we track individual project performance there closely. With author-backed repositories for both Chef and Puppet available, it’s possible to compare the performance of the two projects in a basic fashion. GitHub gives a slight edge to Puppet in terms of total contributors, 121 to 118. Puppet also saw more pageviews on GitHub over the past 90 days, 30,735 to 22,361.

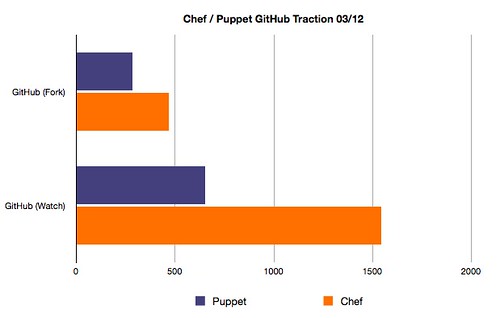

But in metrics relating to explicit interest in the project – specifically the numbers of forks and watchers per project – Chef outperformed Puppet.

The results from GitHub, then, are relatively inconclusive. Implicit metrics like pageviews point one way, explicit metrics like forks another. There is no clear winner in this category.
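For readers who want to put the contributor and pageview figures quoted above on a common scale, here is a minimal Python sketch. It uses only the numbers stated in the text; the `relative_lead` helper and the dictionary layout are our own illustrative choices, not part of any GitHub API.

```python
# Figures quoted in the text (Puppet vs. Chef on GitHub).
github_metrics = {
    "contributors": {"puppet": 121, "chef": 118},
    "pageviews_90d": {"puppet": 30735, "chef": 22361},
}


def relative_lead(values):
    """Return (leader, lead) where lead is the gap between the two
    projects expressed as a fraction of the trailing project's value."""
    leader = max(values, key=values.get)
    trailer = min(values, key=values.get)
    lead = (values[leader] - values[trailer]) / values[trailer]
    return leader, lead


for name, values in github_metrics.items():
    leader, lead = relative_lead(values)
    print(f"{name}: {leader} leads by {lead:.1%}")
```

Expressed this way, the contributor gap is roughly 2.5% while the pageview gap is roughly 37%, which is why the former reads as a slight edge and the latter as a more meaningful one.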

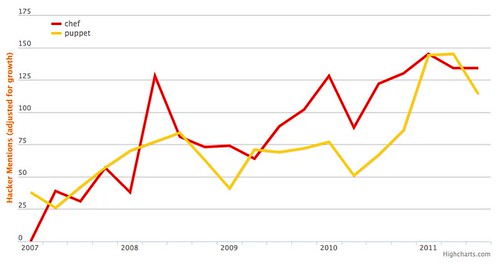

Hacker News

On Hacker News, neither Chef nor Puppet is dominant from a discussion perspective. In fact, mentions of one tend to closely track discussion of its counterpart, according to the Hacker News search APIs.

The correlation is unsurprising except for the timeframe; Chef shows substantial traction in discussion on Hacker News well in advance of its 2009 availability. This suggests artifacts in the returned data, because mailing list traffic archives only date back to 2009.

Shared Scripts

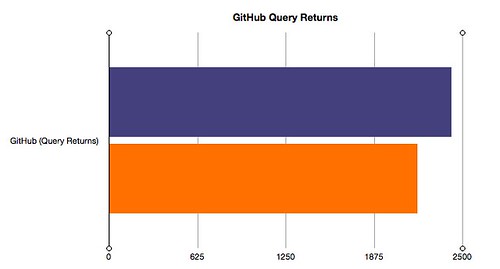

One of the concepts shared by both Chef and Puppet is scripts that implement common patterns. In Chef, these are called cookbooks; for Puppet users, modules. While it’s impossible to effectively measure the number of scripts per project – their library size, so to speak – we can attempt to evaluate first how many each company hosts itself. Second, we may attempt to imperfectly infer community size by comparing query returns for both projects on GitHub, as it is, not surprisingly, common practice to host cookbooks and modules on the site.

For the former, here are the returns. As a side note for those interested, these results were obtained from Puppet’s forge site and Opscode’s Cookbook Site API, respectively.

As you can see, Opscode currently hosts approximately thirty percent more scripts than does Puppet Labs, though to what extent either vendor is focusing their attention on these sites versus their efforts on GitHub is not entirely clear. Comparing general GitHub query results, meanwhile, we see that the lead has changed hands.

As measured by general GitHub queries, Puppet enjoys a slight (~10%) advantage in returns over Chef. It’s a rough metric because it includes all matching repos, but as a broad indicator of traction it has some utility.

The Gist

Ultimately, the data we’ve looked at – aside from the Debian usage information – doesn’t prove the case for either platform. Puppet backers can take heart in its dominance on Debian as well as its GitHub pageview lead and repo traction. Chef advocates, on the other hand, may take comfort in the fact that a project that’s four years younger is outperforming more mature competition in some metrics. For our part, we have (full disclosure) worked with and admire both companies.

And while there is understandably friction between the two communities at times given the functional overlap, it is likely that there’s more than enough oxygen to support both projects indefinitely. Apart from the various community numbers discussed above, both can point to impressive customer rosters and partner bases. Given the opportunity size and scope, however, as well as the legitimate traction behind both projects, the ultimate leadership role for the category may go not to whoever creates the best software but to whoever can best leverage the data it generates. With software valuations in decline, data should increasingly be a product focus.

In the meantime, it will be interesting to watch these projects compete with each other moving forward.

Afterword: In case anyone’s curious, we did look at StackOverflow metrics as well, but the differences were slight enough that we omitted them from the above.

Matt Asay says:

March 13, 2012 at 10:15 pm

I’ve heard it said that Puppet tends to be used by those with lots of machines already in place and in need of configuration management, whereas Chef tends to get used in greenfield opportunities. So Chef tends to get used by new school startups, while Puppet is used by enterprises with lots of systems. I don’t have a huge personal data set to determine the veracity of this, but in my (admittedly slight) experience this distinction seems to hold.

Matt Asay says:

March 13, 2012 at 10:16 pm

I’d love to hear any confirming or contradictory evidence, and maybe particularly how prevalent people see Puppet and Chef being used in cloud deployments.

Jesse Charbneau says:

September 24, 2012 at 6:45 pm

I can say that for the systems I manage, we have easily hosted in the cloud with puppet. Our approach hinged on version 2.7.11 and above, where we were able to leverage facter data (ec2_security_groups) and then built modules that supported a “group” of machines, rather than denoting each host specifically. Match that up with boto and fabric, and we were able to do pretty much anything needed with cloud (AWS specifically in our case – but I imagine the approach could be applied to other cloud providers as well).

Jesse Robbins says:

March 13, 2012 at 11:51 pm

Stephen,

Great post! A few notes about the data:

The Debian data you include here is inaccurate for Chef because Debian & Ubuntu users install Chef via Opscode’s own apt-get repository and/or via RubyGems.

RubyGems provides a wealth of public stats. The Chef Gem alone has been downloaded 678,891 times as of March 13, 2012.

(Puppet also has a Gem which has been downloaded 174,752 times, although they have many download options.)

No matter what tool people choose or how they get it… it is awesome to know we’re helping so many sysadmins, engineers, and developers get awesome stuff done.

I’m proud to be a part of it.

-Jesse Robbins

Cofounder & Chief Community Officer

Opscode – Creators of Chef 😉

Walter Heck says:

March 14, 2012 at 4:11 am

Just for the record, puppet has its own apt repos as well, which are often more up to date than the debian ones 🙂

To the author: great article, interesting statistics!

Luke Kanies says:

March 14, 2012 at 12:37 am

(I’m the founder of Puppet and CEO of Puppet Labs, so I’m clearly biased.)

Note that Puppet is built for sysadmins, not for developers, and most of the github stats are developer-oriented. I’m not surprised to find more parity there, but those developer stats are a small portion of the actual community, and the Debian stats are representative of the wider community. Given how many platforms Puppet runs on relative to Chef, and Chef runs best on Debian and Ubuntu, if Puppet has this much more adoption in those platforms, imagine what it’s like on the platforms Chef doesn’t support well, like Red Hat and OS X.

More useful stats would be to look at things like the user community, where Puppet has 3800 people on our mailing list and Chef has 770. This is a direct reflection of involvement, rather than the indirect github stats.

And correlating the drop in Puppet usage with the gain in Chef usage doesn’t make sense – Chef went from 50 installs to 200, but Puppet apparently dropped by 600. It seems highly unlikely that these changes are anything but coincidence, given the large multiples involved. E.g., we announced our commercial product right around the time this dip started, and we released it right around the time it ended. This is a far more likely cause for the change than any outside factor, especially in such a smaller community.

So, it looks to me like Chef is getting what they want (ruby developers) and we’re getting what we want: Sysadmins managing large-scale infrastructure.

Jesse Robbins says:

March 26, 2012 at 3:41 pm

Hey Stephen, quick update… Opscode just announced that as of March 2012:

* Chef has been downloaded over 800,000 times since 2010!

* Our community grew to over 13,000 registered users

* Over 400 community cookbooks for everything from Apache to Zabbix.

* Over 600 individual developers and 100 organizations are contributors to Open Source Chef!

(See the full announcement here: http://www.opscode.com/blog/2012/03/26/opscode-announces-1950000-funding-hiring-community-growth-and-chefconf/)
