Among the macro technology trends that we’re following at the moment – things like the conflation of duties that is dev/ops, the emergence of open source as a mainstream development mechanism, and the accelerating value of data – there is perhaps none more important than this: computing is getting easier. Quickly.

Remember the old days? When building an application meant procuring hardware, finding rack space for it, provisioning networking, downloading (if you were lucky) and installing software, and building your application from scratch? Well, those days aren’t entirely behind us yet, but they are most certainly not the future.

That this shift is not more widely acknowledged is a function of the number of moving pieces involved. From the advent of cloud computing (coverage) to the rising popularity of development frameworks (coverage), there are a variety of reasons why development is easier today than it has ever been. True, this need for speed is nothing more than part of a longer-term trend. From Assembler to Java to Python, the evolution of programming languages is a more than adequate illustration of computer science’s fundamental recognition that time to value is crucially important.

But what’s different today is the lack of friction in computing, in virtually every area.

Applications

Application installation is simpler today than it once was, yes. Any consumer can tell you that. But for consumers, application installation hasn’t really been all that difficult to begin with. Inefficient, yes, but not too trying: download an .exe or a .dmg, double-click it and you’re off to the races.

Application discovery, on the other hand, has posed a significant challenge for users. Say that you want a Sudoku game: where might you get one? Today, the answer for more and more users is a marketplace. Apple’s proven the concept with a few billion applications downloaded, and that model will undoubtedly be replicated on both Linux and Windows. Marketplaces are materially reducing the friction in application discovery, installation, updating and purchase.

But enterprise applications are a different beast altogether from consumer applications, you argue. Marketplaces lack the same relevance in that market. Well, it is certainly true that the model is far from proven in the enterprise, but that’s not stopping vendors who perceive the opportunity (coverage).

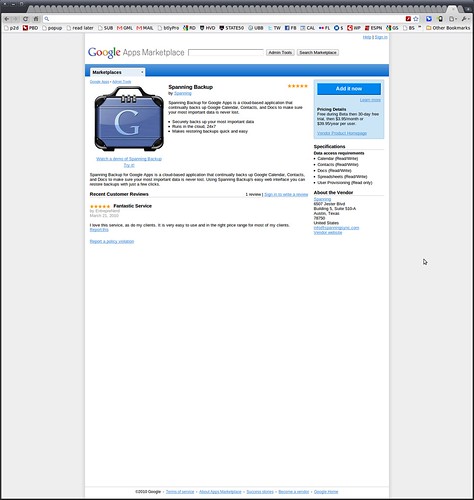

One of the primary limitations of enterprise marketplaces has been application compatibility and integration. Most enterprise applications require implementation and integration expertise, which makes them poor candidates for a marketplace, or at least for those marketplaces that lack professional services resources, unlike, say, the Google Apps Marketplace or Microsoft Pinpoint. But what if the platform is vendor-controlled and implemented as a network application? Then things look very different indeed.

Consider the likes of Heroku or the Apps Marketplace. Both offer enterprise-level applications for free and for purchase, with push-button integration. Running on Heroku and want to add memcached support? You can do it from the command line: heroku addons:add memcached. Want to add backup to your Google Apps account? That takes a single click in the Marketplace.

Not every application will be acquired via a marketplace, of course. But the trendline is clear: more and more applications will be.

Code

As with applications, the discovery and use of code has been greatly simplified via mechanisms such as Github (coverage). No longer are code repositories glorified web-aware filesystems; they are now centers of social activity and collaborative development.

Building on the underlying distributed version control tools’ inherent comfort with divergent copies of a codebase, repositories like Github or Gitorious actively encourage forking, which in turn accelerates the pace of development through parallelization. In that respect, Github can be thought of as the multi-core alternative to single-threaded predecessors such as Google Code or SourceForge.

The friction involved in discovering and obtaining code at present is effectively zero. That’s nice. The effort involved in forking and contributing back to the original codebase is near zero. That is really impressive.

Add in the fact that web companies such as Facebook, LinkedIn and Twitter are increasingly leveraging open source as a primary, mainstream development path (coverage) – spiking the volume of available code – and there has never been a better time to be a developer than today.

Data

We’ve been predicting at RedMonk for a while now that data would emerge not only as an asset, but as an asset worthy of its own marketplaces. Between startups like the aptly named Data Marketplace, Factual and Infochimps, fledgling enterprise efforts like SAP’s Information on Demand and Microsoft’s Project Dallas, and even public datasets such as those from Amazon or Google, this prophecy is clearly on its way to being fulfilled.

So what’s next? APIs, of course. APIs like Infochimps’. Why? Because it’s all about lowering friction, remember. And there is very little that’s lower friction than an API.

There’s a reason Cloudera’s recent contribution, Flume, was a trending repo on Github: the need to collect, aggregate and move data has never been greater.

Most web services today serve up some portion of their data via APIs. But marketplaces will likely find a role to play with value-added APIs: recombining feeds for additional value, introducing custom analytics, or merely serving as a commercial front-end for higher-value streams. Data marketplaces will therefore include not just static datasets like census returns or weather metrics, but API-driven access to real-time datasets, so that data can be analyzed just as it comes off the wire.

Hardware

Even in 2006 (coverage), it was clear that Amazon Web Services were something new and different. And it’s no secret that the market – Amazon included – has come a long way since then. The market for cloud services, which according to Ray Ozzie no one besides Amazon took seriously as recently as 2008, has simply exploded.

So much so that virtually every vendor on the planet is rushing cloud services to market, and cloud washing – the marketing of regular products and services as “cloud” enabled – is now a serious problem for potential customers.

Why the excitement? Because the cloud makes things easier. Is the technology behind it new? That’s debatable. But the lack of barriers to entry is very new, time sharing notwithstanding: that much is indisputable. No longer do startups have to over-provision themselves with development, testing and production environments before they have a realistic idea of their addressable market. With the cloud, they can simply rent the hardware they need, when they need it, and turn it off when they’re done.
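To make the rent-versus-buy arithmetic concrete, here is a toy sketch in Python. Every price and usage figure in it is a hypothetical placeholder, not real vendor pricing; the only point is that up-front provisioning charges for the peak you guess at, while rental charges for the server-hours you actually run.

```python
# Toy comparison of up-front provisioning versus on-demand rental.
# All prices below are hypothetical placeholders, not real vendor pricing.
# Amounts are kept in integer cents to avoid floating-point rounding.

UPFRONT_CENTS_PER_SERVER = 500_000  # assumed purchase price: $5,000 per server
RENTAL_CENTS_PER_SERVER_HOUR = 10   # assumed rental price: $0.10 per server-hour


def upfront_cost_cents(servers_provisioned: int) -> int:
    """Buy enough servers for the expected peak before demand is known."""
    return servers_provisioned * UPFRONT_CENTS_PER_SERVER


def rental_cost_cents(usage: list[tuple[int, int]]) -> int:
    """Pay only for what runs; usage is a list of (servers, hours) periods."""
    server_hours = sum(servers * hours for servers, hours in usage)
    return server_hours * RENTAL_CENTS_PER_SERVER_HOUR


if __name__ == "__main__":
    # Hypothetical startup: provisions for a 10-server peak, but in its first
    # month actually runs 2 servers for 720 hours plus 10 servers for 72 hours.
    usage = [(2, 720), (10, 72)]
    print(f"up front: ${upfront_cost_cents(10) / 100:,.2f}")
    print(f"rented:   ${rental_cost_cents(usage) / 100:,.2f}")
```

A month of rent is not directly comparable to a purchase that is amortized over years; what the sketch illustrates is timing. The rented capacity is paid for only as it is used, and it can be turned off when it is no longer needed.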

It’s a little bit sad that such a basic concept seems revolutionary, but it is. Up-front licensing and payment has been the rule, not least because it maximizes revenue extraction by capturing the customer’s entire capital expense immediately. Contrast that with the reality today, where everything from hardware to storage is available online either free or via traditional online payment mechanisms, and it’s clear that expectations of hardware providers have changed dramatically in a relatively short span of time.

The Net

The technology industry has a well-earned reputation for innovation. But industry innovation has often arrived in spite of, rather than because of, the available technology. Applications and code have been hard to work with and often impossible to find. Data has been inaccessible. Hardware has been too expensive, too difficult to get, or both. And so on.

What’s interesting about the current wave of frictionless computing is not that each of these areas is improving, but that they are all improving at the same time. While dramatic improvements in ease of use can come at the cost of some commercial opportunities (think of the integration of firewall and virus scanning functionality into Windows, for example), in this case the commercial opportunities created should far exceed those lost. For those seeking to compete moving forward, the lesson here is simple: focus on the friction. Reduce the barriers to entry for users, developers and customers, because if you don’t, your competitors will. If you’re not easy to consume, you may as well not be consumable at all.

Too often, we in the technology industry myopically focus on the perceived merits of a given technology, when its success or failure is more likely to be dependent on its availability. As more assets become more available, count on this means of evaluation giving way to a more nuanced view. The best technology is nice to have. But the easiest technology to get is, in the end, more likely to win.
