Thanks to a combination of market factors, principally increased competition, there is no technology market where prices are moving more quickly than cloud infrastructure. After we completed an initial survey and deconstruction of IaaS pricing trends in August of last year, follow-ups proved difficult: prices dropped so often that an analysis was frequently obsolete before it was even published. That was the case last week, when Google announced both the general availability of Google Compute Engine (GCE) and significant price drops, the day after the original analysis had been re-run.

A week later, with no corresponding price drops from competing providers, the decision was made to publish this before the inevitable arrives. As a reminder, this analysis is not intended as a literal expression of cost per service; it is not, in other words, an attempt to estimate the actual component costs for compute, disk, and memory per provider. Such numbers would be speculative and unreliable, relying as they would on non-public information, and would also be of limited utility for users. Instead, this analysis compares base hourly instance costs against the individual service offerings. What this attempts to highlight is how providers may be attempting to differentiate, for example by prioritizing memory over compute capacity. In other words, it’s an attempt to answer the question: for a given hourly cost, who’s offering the most compute, disk or memory?
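As a rough illustration of that question, the comparison for a single instance can be sketched as a set of ratios: how much of each resource one dollar per hour buys. This is only a sketch. The `Instance` fields, the provider name, and the numbers in the usage note are hypothetical and are not taken from the dataset.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Instance:
    """One standard instance as listed by a provider (field names are hypothetical)."""
    provider: str
    name: str
    price_per_hour: float      # list price in USD, Linux, on-demand
    cores: float               # virtual cores or compute units
    memory_gb: float
    disk_gb: Optional[float]   # None when no fixed disk is bundled


def per_dollar_hour(inst: Instance) -> Dict[str, Optional[float]]:
    """Return how much of each resource one dollar per hour buys."""
    if inst.price_per_hour <= 0:
        raise ValueError("price_per_hour must be positive")

    def ratio(amount: Optional[float]) -> Optional[float]:
        # Instances without a fixed disk amount cannot be compared on disk.
        return None if amount is None else amount / inst.price_per_hour

    return {
        "cores": ratio(inst.cores),
        "memory_gb": ratio(inst.memory_gb),
        "disk_gb": ratio(inst.disk_gb),
    }
```

For a made-up instance such as `Instance("ExampleCloud", "medium", 0.10, 2, 4.0, None)`, the function reports roughly 20 cores and 40 GB of memory per dollar-hour, and `None` for disk. Higher values mean more of that resource for the same hourly spend.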

As with the previous iteration, a link to the aggregated dataset is provided below, both for fact checking and to enable others to perform their own analyses, expand the scope of surveyed providers or both.

Before we continue, a few notes.

Assumptions

- No special pricing programs (beta, etc)

- Linux operating system, no OS premium

- Charts are based on price per hour costs (i.e. no reserved instances)

- Standard packages only considered (i.e. no high memory, etc)

- Where not otherwise specified, the number of virtual cores is assumed to equal available compute units

Objections & Responses

- “This isn’t an apples to apples comparison”: This is true. The providers do not make that possible.

- “These are list prices – many customers don’t pay list prices”: This is also true. Many customers do, however. But in general, take this for what it’s worth as an evaluation of posted list prices.

- “This does not take bandwidth and other costs into account”: Correct, this analysis is server only – no bandwidth or storage costs are included. Those will be examined in a future update.

- “This survey doesn’t include [provider X]”: The link to the dataset is below. You are encouraged to fork it.

Other Notes

- HP’s 4XL (60 cores) and 8XL (103 cores) instances were omitted from this survey intentionally for being twice as large and better than three times as large, respectively, as the next largest instances. While we can’t compare apples to apples, those instances were considered outliers in this sample. Feel free to add them back and re-run using the dataset below.

- Microsoft Azure’s “Extra Small” instance, which lists its core count as “Shared,” has been represented as 0.5 of a core for this analysis. If a better estimate becomes available, we’ll include it.

- All of Google’s and Microsoft’s instances and two of Amazon’s are omitted from the disk cost comparison because they do not include a fixed disk amount per instance.

- Relative to the original analysis, IBM’s offerings have been replaced by Softlayer’s, following IBM’s acquisition of Softlayer.

- While we’ve had numerous requests to add providers, and will undoubtedly add some in future, the original dataset – with the above exception – has been maintained for the sake of comparison.
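For anyone re-running the numbers, the preparation rules in the notes above could be applied along the following lines. This is a minimal sketch: the row keys (`provider`, `instance`, `cores`, `disk_gb`) are assumed names and may not match the columns in the published dataset.

```python
from typing import Dict, List

# HP's two largest instances, treated as outliers in this survey.
OUTLIER_INSTANCES = {("HP", "4XL"), ("HP", "8XL")}


def parse_cores(raw: str) -> float:
    """Convert a listed core count to a number; 'Shared' is counted as 0.5 of a core."""
    if raw.strip().lower() == "shared":
        return 0.5
    return float(raw)


def prepare(rows: List[Dict]) -> List[Dict]:
    """Drop the outlier instances and normalize core counts to floats."""
    kept = []
    for row in rows:
        if (row["provider"], row["instance"]) in OUTLIER_INSTANCES:
            continue
        kept.append({**row, "cores": parse_cores(str(row["cores"]))})
    return kept


def disk_rows(rows: List[Dict]) -> List[Dict]:
    """Keep only instances that bundle a fixed disk amount (used for the disk chart)."""
    return [row for row in rows if row.get("disk_gb") is not None]
```

Removing entries from `OUTLIER_INSTANCES` is one way to add the HP 4XL and 8XL instances back before re-running the comparison.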

How to Read the Charts

- There was some confusion last time concerning the charts and how they should be read. The simplest explanation is that the steeper the slope, the better the pricing from a user perspective. The more quickly cores, disk and memory are added relative to cost, the less a user has to pay for a given asset.
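To make the slope reading concrete, here is a small sketch that fits a least-squares line through one provider's instances, with hourly price on the x axis and the resource amount on the y axis. It assumes that is how the charts are oriented, and the numbers in the usage note are invented for illustration rather than drawn from the dataset.

```python
from typing import Sequence


def slope(prices: Sequence[float], amounts: Sequence[float]) -> float:
    """Least-squares slope of resource amount (y) against hourly price (x).

    A larger value means more cores, memory, or disk is added per extra
    dollar per hour, which is what a steeper line on the chart shows.
    """
    if len(prices) != len(amounts) or len(prices) < 2:
        raise ValueError("need at least two (price, amount) pairs of equal length")
    n = len(prices)
    mean_x = sum(prices) / n
    mean_y = sum(amounts) / n
    sxx = sum((x - mean_x) ** 2 for x in prices)
    if sxx == 0:
        raise ValueError("prices must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(prices, amounts))
    return sxy / sxx
```

With hypothetical memory figures, a provider whose instances cost 0.1, 0.2, and 0.4 dollars per hour for 5, 9, and 17 GB yields a slope of about 40 GB per dollar-hour. A second provider offering 3, 5, and 9 GB at the same prices yields about 20, so the first line is steeper and the first provider is the better deal on memory.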

With that, here is the chart depicting the cost of disk space relative to the price per hour.

As mentioned, two of Amazon’s instances (which are EBS only) and all of Google’s and Microsoft’s are omitted from this chart because they do not include a fixed disk amount. That said, of the remaining providers, it’s interesting to see that Amazon remains the most aggressive in terms of the disk space made available. Even granting its economy-of-scale cost advantages versus some of the competitors here, with the cost of disk falling it’s interesting that no player besides Joyent has been more willing to challenge Amazon on this front. Whether this signals a lack of ambition on the part of AWS competitors or a lack of interest from the market in disk versus memory and compute capacity is unclear, but it seems probable that it’s a combination of both.

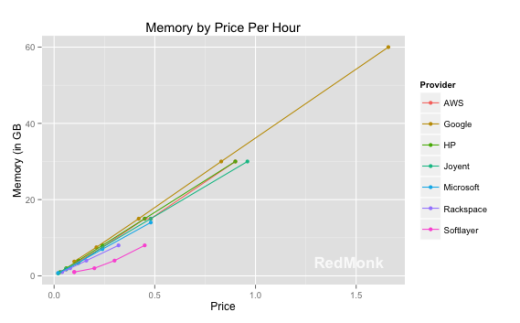

If these charts are intended to expose prioritization from a feature perspective, meanwhile, the plot of memory capacity versus hourly cost is potentially the most revealing.

Several things become obvious from this chart.

- The high correlation in available memory/hourly costs indicates a shared understanding of the importance of memory pricing.

- Google is the most aggressive in terms of the memory per hourly unit of cost.

- HP is apparently pegging itself to AWS.

- Softlayer and, to a lesser extent, Rackspace, are likely to be less competitive for memory focused buyers.

Lastly, we have a chart of the available compute units relative to the hourly cost.

Clearly signaling its intent to compete with AWS is HP, which matches AWS’s pricing on available memory and betters it on available compute units. Rackspace is more competitive on compute than on memory, and AWS, though the dominant market player, is still among the most aggressive in terms of pricing per compute unit. Softlayer and Microsoft’s Azure form the middle of the pack, with Google conspicuously less competitive in terms of available compute units as a function of hourly cost. This is a marked shift from our prior analysis, which had Google among the leaders in terms of compute per hourly cost.

A few overall takeaways, with the reminder that this is merely a survey of standard instances:

- Amazon remains the standard against which other providers are judged, and against which they judge themselves. In virtually every category, Amazon is among the leaders, with competing providers looking to gain an advantage by either matching or exceeding the price/component offered by Amazon.

- Intentionally or not, providers are signaling their prioritizations from an infrastructure perspective. HP, for example, is clearly attempting to separate itself from the pack on the basis of compute value for the dollar (not to mention instance sizes), while Google is doing the same for memory. Disk, meanwhile, seems to be something of an afterthought, with multiple providers not including it as a standard portion of the offering and no one attempting to outcompete AWS as they are in compute and memory, save perhaps Joyent.

- Given the aforementioned prioritization, it will be interesting to observe its impacts moving forward. Amazon is essentially pursuing a leader’s course: highly competitive by each factor, but not necessarily obsessed with being the absolute leader in each. Two obvious competitive strategies emerge from the above deconstruction. The first, exemplified best by Microsoft here, is a middle of the road value proposition, never the most expensive but never the least. The second appears to be a weighted bet, sacrificing performance in one category (e.g. Google with compute) to achieve leadership in another (Google in memory).

- Besides the implications for users, it will be interesting to monitor how a given vendor’s price prioritization may vary over time, based on both customer demands and internal resource availability and costs. Google’s and HP’s strategies, as but two examples, certainly appear to have evolved since the last snapshot.

In the next iteration of our cloud pricing research, we’ll explore how pricing has changed over time across the surveyed vendors collectively. In the meantime, here is a link to the dataset used in the above analysis.

Disclosure: Amazon Web Services, IBM (Softlayer), and Rackspace are RedMonk customers. Google, HP, Joyent and Microsoft are not current customers.