TL;DR – Google is making a lot of progress on a number of fronts, with some extremely interesting and groundbreaking technology. But the enterprise oomph and focus are still just developing.

I had the opportunity to spend time at GCP Next, Google Cloud Platform's main event, in San Francisco back in mid-March.

Before we start to talk about the Google event, we should step back and look at two overall trends in the industry. Firstly, as I have said before, there are really two operating systems in the world, cloud and mobile; everything else is just an implementation detail. Some are incredibly interesting, and strategically significant, details, but they are implementation details nonetheless.

Secondly, life in the cloud is all about workloads and data. Your first goal is to get workloads into your cloud; your second is to build further customer stickiness and loyalty from those workloads by providing significant value in other areas. Google understand the workloads message, but their focus at this event was on providing value in other areas. As I discuss at the end of the post, this sends a very mixed message.

Security, Security, Security

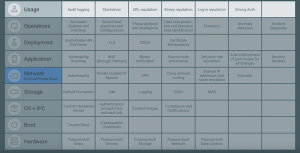

One of the major overarching themes of the entire GCP Next event was security. In the case of security the Google story is one of the most complete in the market. From a developer and infrastructure viewpoint Google Distinguished Engineer Niels Provos talked, and this term is important, to how Google can “pervasively secure you, your data and your customers' data and your applications” on Google's infrastructure.

In an era when most large organisations still practice the hard shell, soft core model of security, a focus on securing everything across your infrastructure from the outset is important. For many organisations security is, sadly, still an afterthought. If you can easily leverage a set of capabilities, as part of a wider offering, that just make things more secure by default, well, why wouldn’t you?

The capabilities matrix presented by Niels is very impressive, ranging from datacenter access to hardware all the way through to operations and application usage.

As the matrix was completed during Niels's talk, my mind kept jumping back to a presentation I attended several years ago from Gus Hunt, former CTO of the CIA. During the talk Gus repeatedly made the point that for the cloud providers security has to be a given – it is table stakes.

More importantly, Gus pointed out it is not when you will be hacked, it is how, and how quickly you can react. Having a team of dedicated security engineers with massive scale automation and threat detection in place is beyond the reach of most organisations. However, being able to leverage the internal expertise that Google have developed over the years – dealing with everyone from script kiddies to nation state actors – is a huge win if you are on their infrastructure.

As my colleague James has already pointed out:

“Frankly any enterprise that calls BAE or Raytheon before it looks to Google about how to better secure information assets in the Cloud era is doing it wrong.”

Overall the security story that Google is telling is compelling, and very hard to argue with. Niels hinted at some additional operational tooling that may be in the pipeline, providing integrated views into deployments that combine auditing and vulnerability scanning, but details were sketchy.

Among the key areas highlighted by Google that developers and enterprises should look further into are the ideas around source code provenance, leveraging Stackdriver for security operations and the container management functionality that is being developed in GCE.

Kubernetes, Containers and Configuration

You do have to love it when someone gets up on stage and describes their job as “What's next”, which is how Eric Brewer described his role as he took to the stage to talk about Kubernetes, deployment and all things container related.

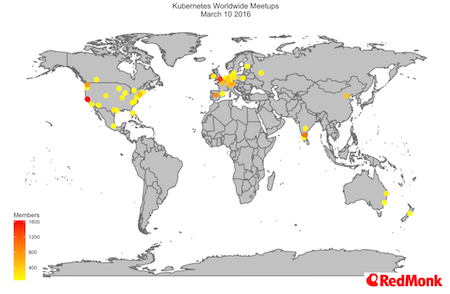

Google are very keen to talk about the velocity of the Kubernetes community, and there is no doubt that it is growing at an incredible rate, on both the open source and commercial fronts. Here at RedMonk we are watching the emergence of Kubernetes very closely and we have already spent quite a bit of time looking at the size and composition of the Kubernetes community. You can read some of our past research here, here and here.

The other message that came through very strongly across the entire event, and in particular during Eric’s presentation, is how Google want to help Kubernetes become the de facto way to run containers. The key line here came during Eric’s presentation, when he showed a slide stating “Docker makes containers accessible, Kubernetes makes containers production ready”.

This, and let us pull no punches here, is a very strong and pointed statement which illustrates where Google's thinking on the wider container ecosystem is.

Configuration, Configuration, Configuration

Anyone who has maintained a large-scale application will tell you that configuration and deployment are almost always among the most problematic areas. Personally I am more than familiar with the trials and tribulations of deployment scripts and DSLs, having used everything from Jumpstart to Chef, Ansible, Puppet and the like, to a host of home-grown solutions over the years.

For new applications we are moving into a world of immutable infrastructure, and containers absolutely give us this at the build level. Deployment and configuration are, however, far from trivial.

The approach being taken to the deployment problem by Google, via Google Cloud Deployment Manager for their own platform, and with Helm, contributed by Deis (now part of Engine Yard), in Kubernetes, is very interesting.

At a high level, the idea is to separate construction and deployment. This approach allows you to confirm, validate, and most importantly store, the configuration you are going to use before starting an actual deployment. The key things to take away here, however, are the simplicity of the approach (you are using YAML as your DSL) and the ease of both encapsulation and composition for more complex environments.
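To make the separation of construction and deployment concrete, here is a minimal sketch in Python. It is not the Deployment Manager or Helm API: every name in it (`web_tier`, `environment`, `validate`, `ConfigStore`, `deploy`) is hypothetical, and it serialises to JSON rather than YAML purely so that it runs with the standard library alone. The point is only the shape of the workflow: compose a configuration from small templates, validate it, store it, and let the deployment step act on nothing but a stored configuration.

```python
import hashlib
import json
from dataclasses import dataclass, field


def web_tier(name: str, replicas: int) -> dict:
    """Hypothetical reusable template (encapsulation): one resource description."""
    return {"name": name, "type": "web", "replicas": replicas}


def environment(env: str) -> dict:
    """Composition: an environment is assembled from smaller templates."""
    return {
        "environment": env,
        "resources": [web_tier(f"{env}-frontend", 3), web_tier(f"{env}-api", 2)],
    }


def validate(config: dict) -> list[str]:
    """Return a list of problems; an empty list means the config is acceptable."""
    errors = []
    names = [r["name"] for r in config["resources"]]
    if len(names) != len(set(names)):
        errors.append("resource names must be unique")
    for r in config["resources"]:
        if r["replicas"] < 1:
            errors.append(f"{r['name']}: replicas must be >= 1")
    return errors


@dataclass
class ConfigStore:
    """In-memory stand-in for wherever validated configurations are kept."""
    _items: dict = field(default_factory=dict)

    def save(self, config: dict) -> str:
        # Construction ends here: refuse to store anything that fails validation.
        errors = validate(config)
        if errors:
            raise ValueError("; ".join(errors))
        body = json.dumps(config, sort_keys=True)
        digest = hashlib.sha256(body.encode()).hexdigest()
        self._items[digest] = body
        return digest

    def load(self, digest: str) -> dict:
        return json.loads(self._items[digest])


def deploy(store: ConfigStore, digest: str) -> list[str]:
    """Deployment acts only on a stored, already validated configuration."""
    config = store.load(digest)
    return [f"deploy {r['name']} x{r['replicas']}" for r in config["resources"]]
```

Because the stored configuration is addressed by a hash of its content, the same input always yields the same identifier, which is one simple way to know exactly what was validated before anything is rolled out.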

The overall approach looks very, very promising. If this promise is truly delivered on, the momentum behind Kubernetes will only increase. We are looking forward to seeing how it is adopted over the coming months in both Kubernetes and via Deployment Manager.

Machine Learning

The big product announcement on the first day of GCP Next was Google's ‘Cloud Machine Learning’ offering. This offering is still in an early access mode, but combines a number of existing product lines, which have been available in different guises since 2011, with some new offerings under an overall product umbrella.

The deep learning story is impressive, and is something which I will return to in a future post, but for most enterprise customers leveraging deep learning is still an aspiration, and there are multiple other areas and problems they need to cover first.

Datacentres, People and Culture

Google have been going to great lengths to talk up both the size of their datacenters and how they approach managing them. Now it is easy to nerd out on the hardware, the buildings, the geographic spread and the overall approach, but the really interesting parts of this talk were elsewhere.

It is particularly important to call out Joe Kava’s thoughts on the importance of having the right employees. We have recently commented on the end of big outsourcing, and the shift that we see towards companies bringing strategic functions back in house. For Google, their datacenters are a key strategic component, and Joe was keen to highlight the fact that only Google-badged employees work in them.

In conjunction with this, the importance of having a strong, trust-driven culture was emphasised. The focus is on learning from failures and continuously improving, not on blame. Now this is similar to everything we know about Amazon's approach as well, and is an approach all of the cloud native companies fully understand. Enterprises would do well to try and learn from the example being set.

Datacentre Sustainability

Google deserve a lot of kudos for being blunt and upfront about how much importance they place upon sustainability.

On a personal level I have a four-month-old son. While some companies and organisations may not care about sustainability, I know I do, more so now than ever before. The fact that Google, along with Microsoft, Amazon and Apple, are willing to come together to force sustainability onto the agenda is something all of them should be applauded for.

If you are selecting cloud infrastructure, include sustainability in your criteria – and make the vendors, and all the analysts you talk to, aware of this. We only have one climate.

Enterprise Readiness

Google were very keen to play up their enterprise readiness, the idea that they will come and meet firms halfway and so forth. We find it very interesting that they are keen to highlight very traditional corporate partners, such as PwC, whom we heard from during the analyst sessions, and Accenture. This is straight out of the original VMware playbook.

The addition of Veritas as a partner is also very interesting, and is an area I look forward to watching develop. Whatever the viewpoint from a cloud native perspective, Veritas are in a lot of enterprise accounts with their NetBackup offering, and giving these enterprise users an easy on-ramp to Google's Cloud Storage Nearline offering is an astute move, and one we would expect to see gaining some traction.

Beta, Beta Everywhere

Culturally, Google still perennially announces products with Alpha and Beta tags. Bluntly put, this is a problem. It may be a running joke for many that Gmail had the longest beta known to mankind, but it is just that, a joke. The product was ready, and in worldwide use, long before the beta tag was removed.

If we look through a selection of current product offerings, including products announced at GCP Next, we see an alpha, beta or preview tag attached to products such as

The length of time a product stays in beta is a metric for enterprise buyers. When a service provider is unwilling to make a commitment and state that the offering is production ready, enterprises, rightly or wrongly, are hesitant to take the plunge.

In the world of cloud native, many companies are ready and happy to take the plunge on software in its very early stages. But most enterprises are only starting on this journey. Giving guidance and timelines for how long an offering is expected to be in alpha, beta or preview would go a long way to assuring enterprises that it may be worth taking the plunge.

Missing Pieces

It was disappointing not to hear more on Google Cloud Functions, the new serverless offering that came out back in February. Given how quickly the competition is hotting up in this space, from one competitor last year with AWS Lambda, to now having four major offerings with Azure Functions and IBM OpenWhisk, this was an opportunity missed.

We are looking forward to hearing more in the near future.

Conclusion

Google has some incredibly interesting technology, and some of the brightest minds on the planet working on it. But Google also needs to step back and understand that the enterprise does not play like the hipster kids.

There are many obvious growing pains as they learn to focus on the enterprise. As Adrian Cockcroft points out, the relentless focus on customers that Amazon achieve during their events was just not in play at GCP Next. The overall run rate is being touted at around $400 million, which is growth, but there is a long way to go.

Diane Greene knows this and, by all indications, has the mandate to make the necessary changes. Some of the first non-technical steps will be the creation of a serious enterprise sales force and a strong partner strategy. Amazon has beefed up their sales force over the last few years. Microsoft and IBM already have strong sales forces, and in a lot of these accounts Oracle are starting to make their presence around cloud felt.

You can have the best technologies and price-competitive offerings, but for a certain type of enterprise customer you need to have someone pounding the pavement and understanding both the business needs and the internal politics of the enterprise organisation as well.

Overall, though, there are many moves in the right direction, but this is a long journey that Google have chosen to travel on. From my point of view, I look forward to the ride.

Disclosures: Google paid for my T&E. IBM, Oracle, Docker, Engine Yard, Chef, Red Hat (owners of Ansible) and Amazon are current RedMonk clients.
