RedMonk’s London-based conference, Monki Gras, returned this spring after a five-year hiatus.

The triumphant return of the event was thanks to:

- the heroic organizing efforts of James Governor and his events team (including Jessica West, Dan McGeady, and Rob Lowe).

- the Monki Gras sponsors, without whom the event would not have been possible. Our sincere appreciation to AWS, Civo, Deepset, CNCF, Neo4j, MongoDB, Akamai, Griptape, PagerDuty, Screenly and Betty Junod for supporting Monki Gras.

- our lovely attendees, who created a collaborative and supportive atmosphere

- our thoughtful and engaged speakers

And speaking of speakers: while we didn’t record videos of the talks this year, we did want to recap their excellent content. The conference theme was “Prompting Craft” and we had fantastic contributions from speakers across the industry discussing what it means to develop software in an era of AI tooling.

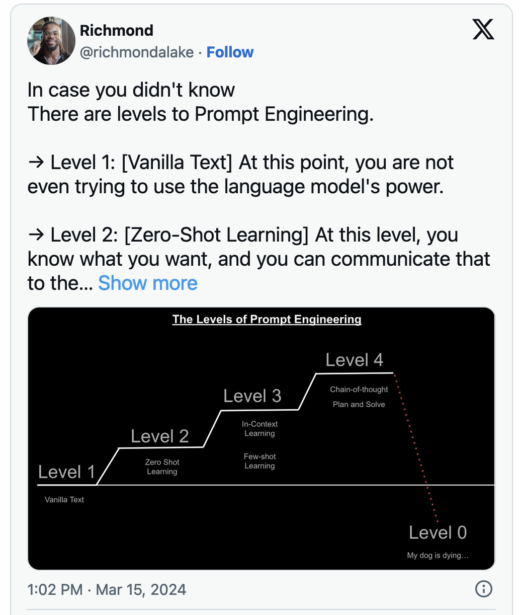

Divine Prompting: Less Is “Truly” More by Richmond Alake

Alake’s talk went meta, drawing parallels between divine creation myths and the evolving techniques in prompt engineering. This talk was a great vibe to start the conference, talking about things on a much grander scale than just the AI trends of the moment (and it was Alake’s first talk, which makes it all the more impressive!)

Suggested references from Alake’s talk include:

- Prompt Compression and Contrastive Conditioning for Controllability and Toxicity Reduction in Language Models

- LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models

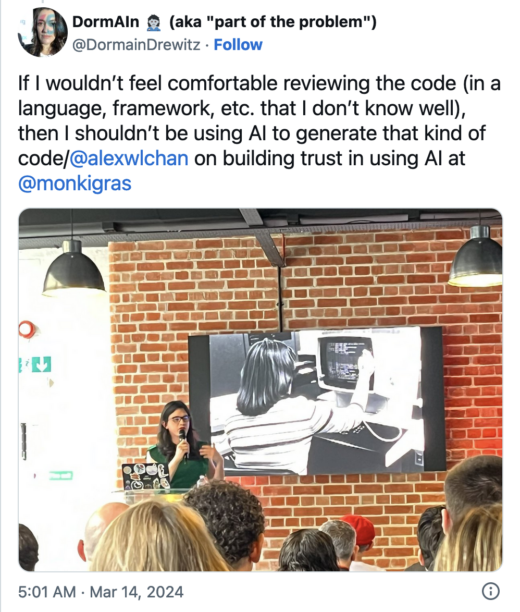

Step… Step… Step… Towards Better Prompts by Alex Chan

In this talk, Chan drew a lovely comparison between learning to dance and using AI tools. In particular I enjoyed their commentary around the importance of trust in both domains. In dancing, you have to have mutual understanding and rapport with your partner before you can do complicated steps; similarly, our experiments with AI tooling need to build up over time, beginning within our own areas of competence and expertise, so that we come to understand the capabilities and limits of the tool.

(Also, they demonstrated a live dance step on stage for us!)

Chan’s notes from their talk are available on their blog.

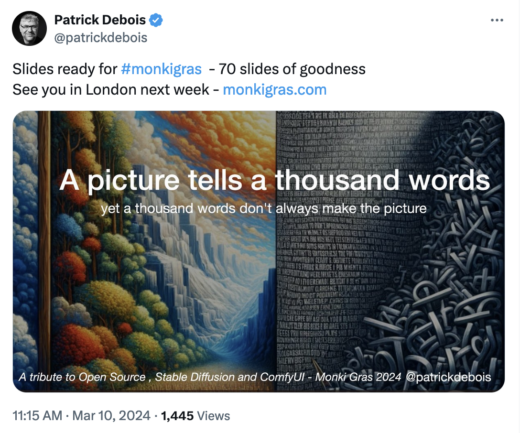

A good picture tells a thousand words – yet a thousand words don’t make a good picture by Patrick Debois

Debois’ talk was a gold mine of information about various tools that can assist with AI image generation. While Stable Diffusion can generate impressive images with a good prompt, augmenting it with other tools can make your outputs much more compelling.

Debois demoed tools that enhance AI-driven image creation, including ComfyUI, Segment Anything, ControlNet, and AnimateDiff (among others).

The deck itself was a work of art and we’ll be sure to link to it once it’s available!

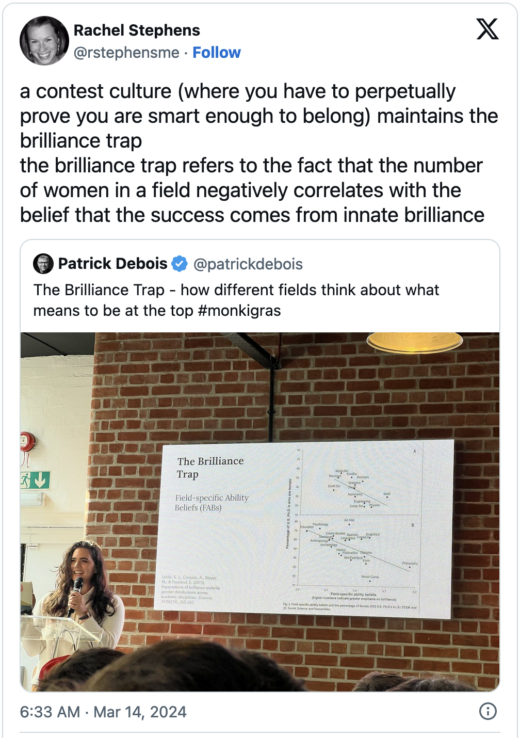

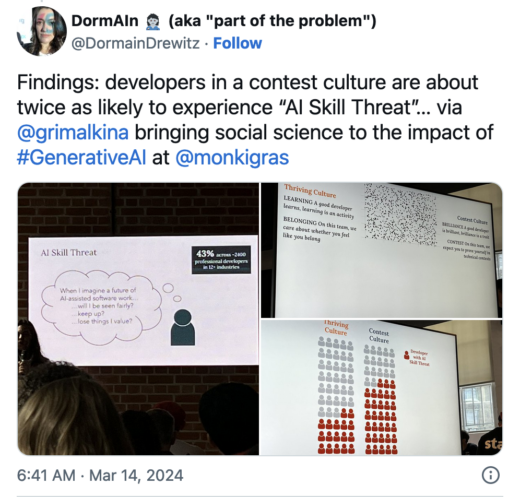

Building for the New Developer: Why the psychological science of software teams unlocks the future by Cat Hicks

The perpetually insightful Dr. Hicks gave a delightful talk about how to enable developer thriving in a world of generative AI coding tools. She delved into the sociocognitive factors of both individuals and teams that are predictive of whether AI will be seen as a threat or an opportunity.

Read more about the team’s research:

- Is AI skill threat part of the new developer experience?

- Generative-AI Adoption Toolkit

- Thriving Blobs – A Developer Success Lab Comic

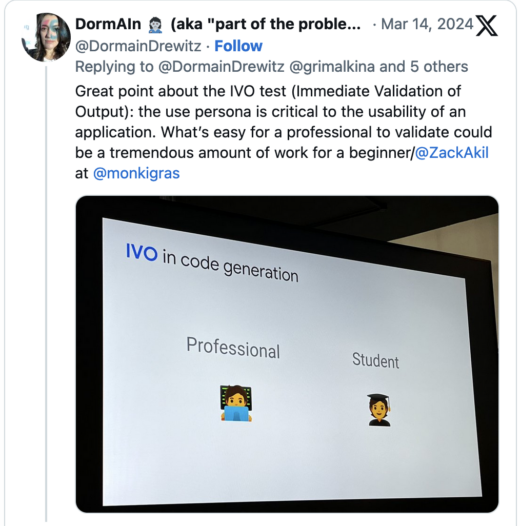

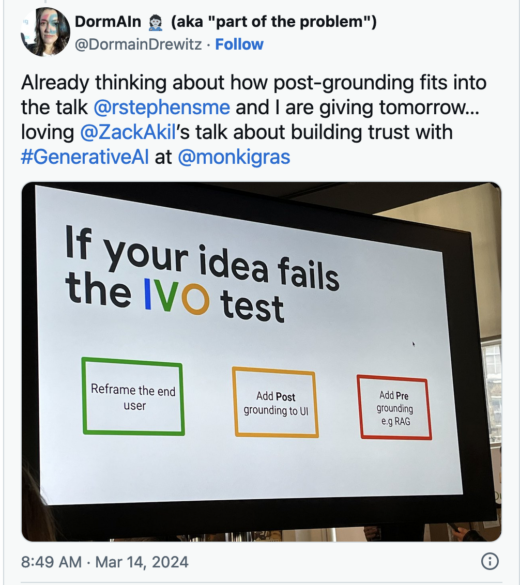

Is your GenAI app a “dud” or “straight to prod”? The IVO test will be the judge by Zack Akil

Akil provided a framework for how to assess which types of applications are good fits for GenAI, knowing that GenAI is prone to hallucinations. The IVO test is: can a user Immediately Validate the generated Output? This means designers of AI applications need to think not only about the tech they are building but also about who is using it.

Some ways to improve applications if the IVO test fails are post-grounding in UI (e.g. providing resources that make it easy for the user to verify the AI output) or pre-grounding via RAG.
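To make the two grounding ideas a bit more concrete, here is a minimal Python sketch. It is my own illustration, not code from Akil’s talk. The `Source` type, the word-overlap retriever, and the `generate` callable (a stand-in for whatever model you call) are all assumptions made for the example; a real system would use a proper retriever.

```python
from dataclasses import dataclass


@dataclass
class Source:
    title: str
    text: str


def retrieve(question: str, corpus: list[Source], k: int = 2) -> list[Source]:
    """Toy retriever (assumption): rank sources by words shared with the question."""
    words = set(question.lower().split())
    scored = [(len(words & set(s.text.lower().split())), s) for s in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [s for score, s in scored[:k] if score > 0]


def build_prompt(question: str, sources: list[Source]) -> str:
    """Pre-grounding: put the retrieved text in front of the model."""
    context = "\n".join(
        f"[{i + 1}] {s.title}: {s.text}" for i, s in enumerate(sources)
    )
    return f"Answer using only these sources:\n{context}\n\nQuestion: {question}"


def answer_with_sources(question, corpus, generate):
    """Post-grounding: return source titles with the answer so a UI can show them."""
    sources = retrieve(question, corpus)
    answer = generate(build_prompt(question, sources))
    return {"answer": answer, "sources": [s.title for s in sources]}
```

The point of returning `sources` next to `answer` is that the interface can then show the user where the answer came from, which is what makes immediate validation possible.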

Structure and Agency – A social scientist’s approach to prompting complex systems by Mathis Lucka

Lucka defined AI agents as “models that can make a plan to resolve complex tasks, use tools, and adjust their behavior based on the observed consequences of its actions.” That sounds a bit like politics, doesn’t it? Lucka even referenced Karl Marx, in the context of systems and determinism. The question is perhaps how political thought can inform us about agent-based systems and their utility. Lucka certainly enjoyed bringing his academic background in social science into the AI realm for the talk.

When it comes to generative AI and agent-based systems we can layer structure onto agents to improve the feedback loop. One method for doing so is by improving the prompt, but Lucka argued that programming rather than prompting would lead to more stable, reliable AI systems.

A suggested reference from Lucka’s talk is ReAct: Synergizing Reasoning and Acting in Language Models.
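As a rough illustration of what layering structure onto an agent in code (rather than in the prompt) might look like, here is a small Python sketch. It is not taken from Lucka’s talk and is only loosely in the spirit of an act-and-observe loop. The `TOOLS` registry, `run_agent`, and the `scripted_model` stand-in for an LLM are all hypothetical names invented for the example.

```python
from typing import Callable

# Hypothetical tool registry: the code, not the prompt, decides what may be called.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def run_agent(
    task: str,
    model: Callable[[str, list[str]], dict],
    max_steps: int = 5,
) -> str:
    """Ask the model for an action, run it, and feed the observation back."""
    observations: list[str] = []
    for _ in range(max_steps):  # hard step limit enforced in code
        action = model(task, observations)
        if action.get("type") == "finish":
            return action["answer"]
        tool = TOOLS.get(action.get("tool", ""))
        if tool is None:
            # Structural guardrail: an unknown tool becomes feedback, not a crash.
            observations.append(f"error: unknown tool {action.get('tool')!r}")
            continue
        observations.append(tool(action["input"]))
    return "stopped: step limit reached"


def scripted_model(task: str, observations: list[str]) -> dict:
    """Stand-in for an LLM (assumption) so the sketch runs offline."""
    if not observations:
        # First attempt uses a tool name that does not exist.
        return {"type": "tool", "tool": "calculator", "input": "2+3"}
    if observations[-1].startswith("error"):
        # Adjusts its behavior after seeing the error observation.
        return {"type": "tool", "tool": "add", "input": "2+3"}
    return {"type": "finish", "answer": f"The result is {observations[-1]}"}
```

Here the step limit and the tool whitelist live in ordinary code, so they hold no matter what the model outputs; the prompt is left to do only what prompts are good at.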

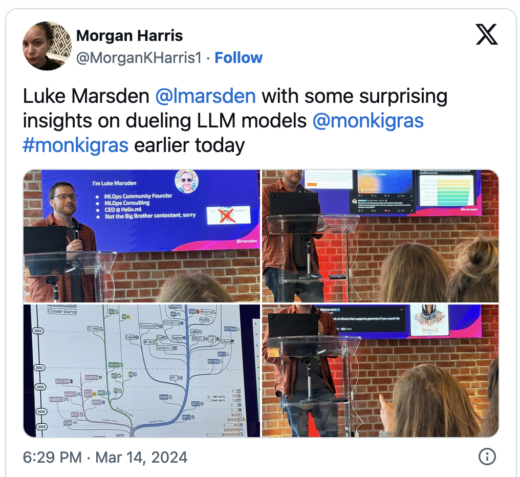

Where we are with Open LLMs by Luke Marsden

Marsden talked about the state of open vs. proprietary LLMs.

He shared data indicating the quality of output from open models is catching up with private models, especially following the release of Mistral-7B. He also had a “family tree of LLMs” slide that showed an interesting evolution of models over time, and he highlighted the Mixtral model.

Marsden’s talk was a great roundup of model evolution over time, and made it very clear that OpenAI isn’t the only game in town. Competition is growing, which will be great for the industry as a whole. If I had a teeny issue with the talk it would be that definitions of what is open, what is open source, and what is an open model were somewhat glossed over. The talk was good, but the industry as a whole really needs to grapple with what truly is open or not in this important frontier area.

Brewing Collaboration: Empowering and Inclusive Generative AI by Farrah Campbell & Brooke Jamieson

Campbell and Jamieson drew parallels between the history of the computing industry and the brewing industry, talking about how these industries were once the province of women and how that changed over time. They discussed the potential impacts we may face when the people who use AI tools look very different than the people who make AI tools.

I also appreciated how they outlined their approach to prompting, including clarity of intent, context and background, prompt structure, iterative refinement, and task specificity.

(They also gave their entire talk with an alarm blaring outside, so extra props for that!)

Prompting Change by Rafe Colburn

Colburn discussed methods of prompting change within his teams as a person in a senior leadership position. A few of the methods he mentioned include:

- trickle down: working through your direct reports and getting the level of management below you to advocate for what you want to advocate for; this is a powerful but sometimes slow mechanism for change

- written word: in particular I liked his approach of posting some of his thought processes on LinkedIn instead of just internally; people take public writing more seriously than internal writing

- share the dashboards: are you transparent about what it is the leadership team cares about?

- hack week: this gives you a sense of what people will do left to their own devices; based on what people pick to work on, do you have a sense of whether your messages and priorities are organically sinking in?

Producing change requires humility. You have to reflect on why people are not changing and focus on listening.

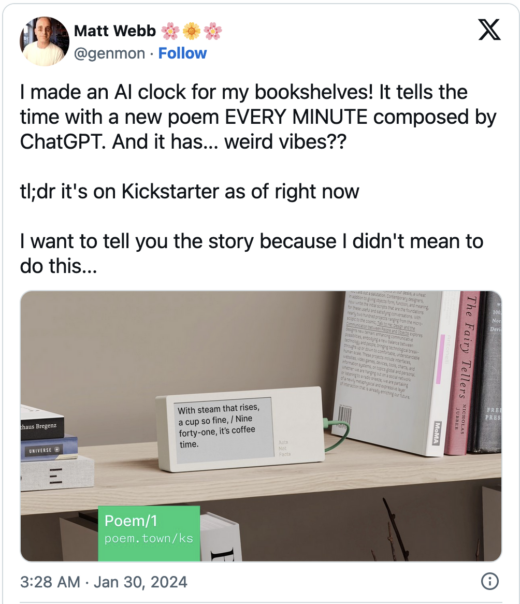

Acts not facts and AI clocks by Matt Webb

Webb presented his delightful project creating a clock that uses AI to write motivating poems about the time. This is a particularly interesting project because AI hallucinates and does not have a concept of time. Webb’s goal wasn’t necessarily to create a clock that tells you the correct time, but rather to play with AI in a way that felt imaginative and profound.

I also enjoyed his closing commentary about this project giving him a feel for the “vibes” of the LLM. Spending time with the poetry helped him better understand the voice of the output, and that’s something to keep in mind as we incorporate these tools into our world.

Stay tuned for a Day 2 recap coming shortly!

Disclaimer: Due to the nature of sponsorship as a concept, RedMonk has a commercial relationship with all the sponsors referenced above. 🙂 That said, no speaking spots at RedMonk events are ever available for sale; all speakers were invited on the merits of their proposals.
