As containers have emerged as an important technology over the last couple of years, a single thought keeps going through my mind: will the incumbents allow containers to disrupt their markets in the same way that virtualization previously disrupted operating system vendors?

Virtualization and Standardisation

It is useful to step back a bit and understand the disruption that virtualization brought about. The initial story and messaging around virtualization was all about consolidation, server reduction, cost reduction and so forth. But people quickly realised that the real benefit of virtualization was a layer of abstraction from hardware, which suddenly meant a developer could request, and be relatively assured of getting, the same environment at all times.

As with most useful technologies, when you make them easier to use via some form of packaging, user adoption increases. We at RedMonk have been arguing this for a long time. When virtualization became established, it increased, rather than decreased, the demand for compute capacity, while allowing enterprise IT organisations to standardise on particular hardware.

This standardisation also leads to much simpler and more predictable capacity planning. That drives a large business benefit, because budgeting becomes a lot easier from an accounting perspective. There is no surer way for a large IT department to win its accountants' hearts than with reasonably accurate spending predictions that can be allocated in easy quarterly chunks. If you have ever been involved in capex discussions, you will also know that nothing causes more angst and annoyance for the finance folks than turning up a few weeks before the end of a financial reporting period with a request for extra funds.

The lure of containers

The explosion of interest in containers, and in Docker in particular, is well documented. While cloud native companies have embraced Docker, enterprise adoption is, as you would expect, a bit slower, although it is still far faster than anything else I have seen in recent times. The sheer speed of developer adoption is forcing large IT organisations to support containers in production.

It became obvious very early on that the incumbent technology vendors had seen containers coming. Wisely, rather than trying to prevent this trend, they are rapidly getting on board with the new world and developing various offerings around containers. The Open Container Initiative will only strengthen this, and the core infrastructure around containers, as people generally view containers today, will ultimately be subsumed into the operating system.

This of course leads us to operations and orchestration. Anyone who has built an application with Docker across more than a few hosts knows that it can get complex and unwieldy very quickly. Over the last eighteen months, and particularly over the last twelve, we have seen an array of different solutions emerge. While the market is still nascent, some clear product offerings are taking shape, in particular products built around Kubernetes, such as Tectonic from CoreOS and the latest version of OpenShift from Red Hat.

We do need to keep in mind that not every organization is at the bleeding edge, and while developers may be using containers, the ops folks have a whole set of tooling in place that they will not want to just abandon.

On-Ramps for the Enterprise

Ops brings us back to virtualization, and in a large chunk of the enterprise, virtualization means VMware, Microsoft Hyper-V and Xen. Within the enterprise, VMware's footprint is particularly large.

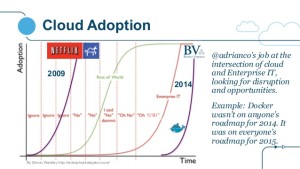

However, most enterprise IT departments are just not very agile. As Adrian Cockcroft succinctly puts it in his well-known, viral graph, they are always late to the party.

This is where the approaches that VMware and Microsoft are both taking become really quite interesting. In the VMware world you now have an easy on-ramp via vSphere Integrated Containers, and an opinionated version of the new world in the Photon Platform. Consider this an earlier invitation to the hipster party than enterprise IT is used to. It is also an invitation they can easily explain to their finance departments.

While many cloud natives may scoff at the vSphere approach, companies that have invested heavily in their infrastructure will see it as a much easier transition than retooling everything.

Hypervisors and Unikernels

The other common pattern we are seeing is the emergence of containers running much closer to, or directly on, the hypervisor. As Stephen has pointed out before, with containers the atomic unit of computing has become the application.

Developers have already learned how compact and self-contained their applications become with containers. That raises the question of how much more can be removed, which leads logically to unikernels.

While it is still early days, the pattern of running containers as close to the hypervisor as possible shows that the incumbents are already thinking about this. If, from the operations point of view, managing unikernels is almost identical to managing containers or VMs, then the biggest barrier to enterprise adoption is removed.

Unikernels will then become a developer story, and we are already seeing a lot of work to support JavaScript, Go and so forth in unikernel architectures. But, as always, packaging will be key. While some commercial offerings are emerging, unikernels are currently still largely an academic exercise. This will definitely change in the coming year.

And what does this mean for developers?

The most interesting longer-term question will be the approach these enterprise companies take to developing their containerized applications. Developed correctly, those applications should be eminently portable to other environments that support containers, giving developers significant choice.

Ultimately, the on-ramp the incumbents are creating for large organisations will have two impacts: greater choice in how the underlying infrastructure is managed, and application portability.

Disclosure: VMware, Red Hat, Microsoft and CoreOS are RedMonk customers.